Researchers have used a machine-learning algorithm to decipher the seemingly inscrutable facial expressions of laboratory mice. The work could have implications for pinpointing neurons in the human brain that encode particular expressions.

Their study “is an important first step” in understanding some of the mysterious aspects of emotions and how they manifest in the brain, says neuroscientist David Anderson at the California Institute of Technology in Pasadena.

“I was fascinated by the fact that we humans have emotional states which we experience as feelings,” says neuroscientist Nadine Gogolla at the Max Planck Institute of Neurobiology in Martinsried, Germany, who led the three-year study. “I wanted to see if we could learn about how these states emerge in the brain from animal studies.” The work is published in Science (ref. 1).

Gogolla took inspiration from a 2014 Cell paper (ref. 2) that Anderson wrote with Ralph Adolphs, also at the California Institute of Technology. In the study, they theorized that ‘brain states’ such as emotions should exhibit particular characteristics: they should be persistent, for example, enduring for some time after the stimulus that evoked them has disappeared. And they should scale with the strength of the stimulus.

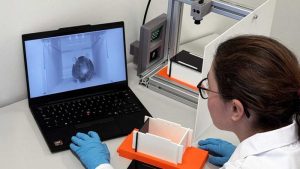

Gogolla’s team fixed the heads of mice to keep them still, then provided different sensory stimuli intended to trigger particular emotions, and filmed the animals’ faces. For example, the researchers placed either sweet or bitter fluids on the creatures’ lips to evoke pleasure or disgust. They also gave mice small but painful electric shocks to the tail, or injected the animals with lithium chloride to induce malaise.

The scientists knew that a mouse can change its expression by moving its ears, cheeks, nose and the upper parts of its eyes, but they couldn’t reliably assign the expressions to particular emotions. So they broke down the videos of facial-muscle movements into ultra-short snapshots as the animals responded to the different stimuli.

Machine-learning algorithms recognized distinct expressions, created by the movement of particular groups of facial muscles (see ‘Emotional animals’). These expressions correlated with the evoked emotional states, such as pleasure, disgust or fear. For example, a mouse experiencing pleasure pulls its nose down towards its mouth, and pulls its ears and jaw forwards. By contrast, when it is in pain, it pulls back its ears and bulks out its cheeks, and sometimes squints. The facial expressions had the characteristics that Anderson and Adolphs had proposed — for example, they were persistent and their strength correlated with the intensity of the stimulus.

Read more: www.nature.com